I am the author of the "In Defense Of" series of articles, so you might not be surprised that one of my favorite things in Hearthstone is making unusual cards work.

I could talk your ear off for an hour about why it might be worth running Mogor the Ogre in a competitive deck (and I might in a future column). It would just be words, though, in the absence of practical testing. In an effort to make sure that the articles I publish are reporting more than just what I happened to get lucky with last week, I've taken some baby steps toward the holy grail of statistics-based deckbuilding. I want to share some of the lessons I've learned about just how difficult this quest is.

Please note that I have no formal background in statistics. I am acutely aware that I may not be using the best techniques. My hope is simply to show my work so that others may build on it or throw it away. Either way, more conversation and more statistics can only be good for the game.

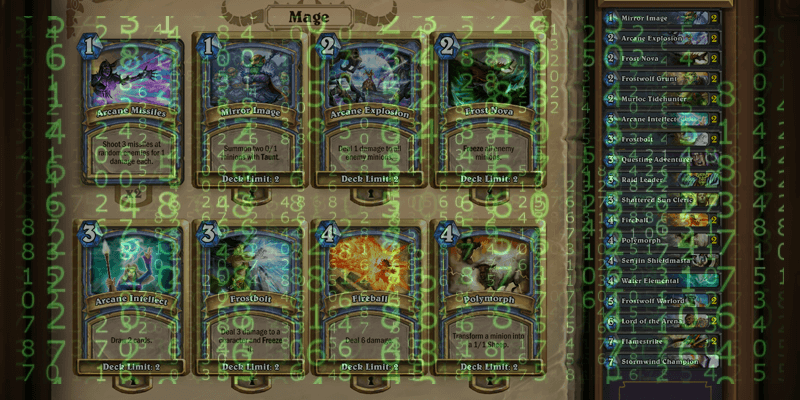

Win Rate By Card

Everything in competitive Hearthstone comes down to one ultimate question: does it win? For a deck, we measure that by the win rate, whether overall or in specific matchups. For a card, it is a bit more complicated, because we have to ask the question within the context of a specific deck. We aren't interested simply in the win rate of the deck, but in how that card affects the win rate of the deck. Does the deck win more or fewer games because that card is in the deck?

The most obvious way to gather data on this type of question is to test two versions of a deck that differ by only one card. This approach is impractical, simply because of the number of games required for reliable data. As modernleper pointed out in his article about win percentages, even data sets with 50 to 100 games can show significant variance, especially given the selection bias inherent in publishing results. To gather reliable data on a representative sample of matchups for multiple versions of a deck would require playing hundreds of games within a short time period, which is daunting even for the most prolific players. Even if such an experiment were carried out, it would only address the relative merits of two possible cards in a specific deck in a specific meta. Little more than a drop in the sea.
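To put rough numbers on that variance, here is a back-of-the-envelope sketch in Python. It assumes each game is an independent coin flip with a fixed win probability and uses the normal approximation for a 95% interval. The function name `margin_of_error` is just for illustration.

```python
import math

def margin_of_error(games_played, win_rate=0.5, z=1.96):
    """Approximate 95% margin of error for an observed win rate.

    Assumes independent games and the normal approximation to the binomial.
    """
    standard_error = math.sqrt(win_rate * (1 - win_rate) / games_played)
    return z * standard_error

for n in (50, 100, 400):
    print(n, round(margin_of_error(n), 3))
```

Under those assumptions, a 50% win rate measured over 100 games is only pinned down to within about 10 percentage points either way, and it takes around 400 games to get that under 5 points.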

Since in the real world of Hearthstone we all build decks and test them with many variables, with or without statistics, it is worth trying to see if we can be a bit more clever.

One simple way to measure a card's effectiveness in a deck is to compare the win rate in games where that card was drawn to the win rate in games where it was not drawn. If the win rate is higher in games where the card was drawn, logically it should be helping the overall win rate of the deck. After all, cards that are not drawn cannot affect the outcome of a game. I will refer to this approach as "win rate by card," since it is simple to calculate for every card in a decklist, and thereby evaluate many of them at once.
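The calculation described above can be sketched in a few lines of Python. This is an illustration only, not the program used for this article: the record layout (a set of card names plus a win flag per game) and the sample games are made up.

```python
def win_rate_by_card(games):
    """Return {card: (rate_with, n_with, rate_without, n_without)}.

    `games` is a list of (cards_seen, won) pairs, where cards_seen is a set
    of card names and won is a bool. This layout is a hypothetical one.
    """
    all_cards = set()
    for cards, _ in games:
        all_cards |= set(cards)

    results = {}
    for card in sorted(all_cards):
        with_card = [won for cards, won in games if card in cards]
        without_card = [won for cards, won in games if card not in cards]
        # A rate is None when there are no games in that group.
        rate_with = sum(with_card) / len(with_card) if with_card else None
        rate_without = sum(without_card) / len(without_card) if without_card else None
        results[card] = (rate_with, len(with_card), rate_without, len(without_card))
    return results

# Made-up games, purely for illustration.
games = [
    ({"Wild Growth", "Savage Roar"}, True),
    ({"Wild Growth"}, True),
    ({"Savage Roar"}, False),
    ({"Keeper of the Grove"}, False),
]
wrc = win_rate_by_card(games)
for card, stats in wrc.items():
    print(card, stats)
```

Keeping the game counts next to each rate matters, because a rate built on a handful of games says very little on its own.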

Win rate by card has its flaws. For one thing, it does not take into account matchups, so it is likely to be particularly sensitive to small sample sizes, since each card is only played in a fraction of games. For another, it compares the effectiveness of a card to other cards in the same deck, not to other potential cards that one might run in that slot. So, for example, when I ran this type of analysis on a data set of about 100 Demon Zoo games, I found that for nearly every card in the list, the win rate in games with that card was statistically indistinguishable from the win rate in games without it. This doesn't mean that every card in Zoo is mediocre. It just means that the deck is built to be consistent. There are no superstar cards in Zoo: it is a deck of team players. By contrast, Wild Growth and Savage Roar were major standouts when I did the same analysis on a Combo Druid data set, boosting the win rate significantly in games where those cards played a role.

I'm sure it isn't news to anybody that the strength of Combo Druid rests heavily on the ability to draw those two cards. While that result tells us nothing new about the cards, it does teach us something about Win Rate by Card (WRC): it shows when a card is a star player in a deck. So if you were testing an unusual card in the role of a big threat, WRC would be a good way to show that it is working well. This is what I used it for in my article on Drakonid Crusher. In that Dragon Combo Druid deck, Drakonid Crusher was one of a few cards with standout win rates compared to the rest. The other three were Wild Growth, Savage Roar, and Dr. Boom. While I did not go into mathematical details in that article, the statistical analysis that I did while writing it gave me confidence in my claims about the worth of the card.

If WRC can confirm that a card is a star player, can it show that a card is a weak link? Well, maybe. After initially devising this method of analysis, I started routinely applying it to any deck that I played for more than 30 or 40 games within a few days. One thing I noticed was that in most decks, Kezan Mystic underperformed significantly compared to how it felt. I think it is fair to say that if a tech card with few synergies in the deck produces a win rate lower than the win rate in games where that card is not played, it is bringing down the deck as a whole and should not be run. WRC can therefore serve as a check on the psychological tendency to remember big winning plays over boring, grindy losses.

When it comes to cards that fit into the gameplan of a deck in more integral ways, though, WRC can be misleading. When I ran the analysis on a Midrange Hunter data set, I found that several cards were underperforming: Houndmaster, Unleash the Hounds, Knife Juggler, and Quickshot (all 2-ofs), and Ironbeak Owl (a 1-of). When I experimented with substitutions for those cards, however, I found that if I changed more than 2, suddenly standout cards like Animal Companion looked mediocre. What happened? The optimistic interpretation is that whatever substitution I tried made the deck more consistent, so the good cards stayed good, but the overall win rate of the deck improved.

The other interpretation is that the lackluster cards played some role in the success of the star cards, and without them the gameplan of the deck started to break down. To tell the difference, you would need to compare overall win rates with different card choices — back to the brute force technique we looked at first, with all its shortcomings.

Statistical Significance

Up to now, I have been talking about comparing win rates, but it's not enough to just look at the numbers and see if one is higher than the other. After all, it might be that there is really no difference between the win rates when a card was drawn or not, and there only appears to be one because of random variation. What we need is a test for statistical significance, to make sure that we are looking at real differences in win rates, not just getting tricked by random noise.

The Python program I wrote to do my analysis used a T-test to calculate a p-value for each win rate comparison. My understanding is that a p-value represents the probability of seeing a difference at least as large as the one observed if the two data sets were actually samples of the same phenomenon, i.e. if they differed only because of random noise. In the sciences, p < 0.05 is generally regarded as the cutoff value for statistical significance. Some fields use 0.1, while others use 0.01. I chose to use 0.1 as my cutoff value, since my sample sizes were small, but I paid extra attention to effects with p < 0.05. Note that a 0.05 p-value does not mean that there is a 95% chance that the observed effect is real, nor a 5% chance that there is no effect: the difference between two data sets might be wrong in all sorts of ways.

For example, it might be that Wild Growth actually makes Combo Druid win less than average, and the observed effect of an increased win rate was just random variance. The p-value only takes into account the probability that Wild Growth doesn't affect the win rate at all. So, while it is a useful proxy to judge the statistical validity of an observation, it does not directly tell us what we care about, namely whether our specific hypothesis is true.
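For readers who want to try a significance test on their own win/loss counts, here is a minimal sketch. To be clear, it is not the T-test program described above: it swaps in Fisher's exact test, a standard test for 2x2 tables of counts that copes with small samples, and uses only the Python standard library. The function name and the example counts are made up for illustration.

```python
from math import comb

def fisher_exact_two_sided(wins_a, losses_a, wins_b, losses_b):
    """Two-sided Fisher exact p-value for a 2x2 table of wins and losses.

    Group A might be games where a card was played, group B games where
    it was not.
    """
    n_a = wins_a + losses_a
    n_b = wins_b + losses_b
    total_wins = wins_a + wins_b
    total = n_a + n_b

    def prob(k):
        # Hypergeometric probability that group A holds exactly k of the
        # wins, if wins were spread across both groups purely at random.
        return comb(total_wins, k) * comb(total - total_wins, n_a - k) / comb(total, n_a)

    observed = prob(wins_a)
    lowest = max(0, n_a - (total - total_wins))
    highest = min(n_a, total_wins)
    # Add up every possible table that is at least as unlikely as the
    # observed one; the small tolerance guards against float rounding.
    p = sum(prob(k) for k in range(lowest, highest + 1)
            if prob(k) <= observed * (1 + 1e-9))
    return min(1.0, p)

# Made-up counts: 30-15 with the card played, 25-30 without it.
p_value = fisher_exact_two_sided(30, 15, 25, 30)
print(p_value)
```

A small p-value here says only that a gap this large would be unusual if the card made no difference. As discussed above, it does not say how likely it is that the card is actually good.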

It has been suggested to me that a T-test/p-value is not the best way to measure statistical significance for WRC. Again, I am not an expert on statistics. There may indeed be a better technique, and I hope others will improve upon my methods and share their work. The main points I want to make are that we all really ought to be using some sort of test of statistical significance any time we compare Hearthstone data, and that we should be careful to understand what those tests do and do not tell us about the data.

Making the Most of the Data You Have

Ideally, WRC would use data including every card drawn or put into play in every game, and the outcome of those games, since that would include every game where that card could have affected the outcome, even if just by sitting dead in hand for the entire game. Unfortunately, Track-o-bot, the software I use to record my games, only tracks cards played from hand, so it captures neither cards put into play by effects like Mad Scientist or Deathlord, nor cards drawn but never played. This causes a few potential problems. First, it means that secrets will tend to be undervalued by WRC, since you ideally don't want to play them from hand. (Mad Scientist ought to look good though.) Second, it means that late game cards will tend to look better than they should, because they only get played in games where you survived long enough to afford them.

For my Drakonid Crusher article, I was mainly concerned with the second problem, since Drakonid Crusher is fairly expensive, and its ability means that you tend to wait to play it. My initial analysis of its effect on the win rate in games where it was played made the card look very good, but I needed to account for the possibility that it was bringing down the win rate by languishing in hand as much as it was helping the win rate by being played on the board. To do that, I made a list of other cards in the deck that served a comparable function to Drakonid Crusher — Azure Drake, Hungry Dragon (dragons), Sylvanas Windrunner (a hefty 6-drop), and Force of Nature (a finisher card).

I then compared the win rate in games where Crusher was not played to the win rate in games where each of those cards was played. The idea is to assume that in every single game where Crusher was not played, it sat dead in hand, and that if it had been one of the substitute cards, it would have been played instead. Obviously that assumption is ludicrously pessimistic about Crusher, but if the comparison were to show no statistical difference, even based on that assumption, we should be able to conclude that Crusher did not have a negative effect on the win rate in games where it wasn't played. No statistical difference was in fact what I found, giving me more confidence in the merits of Drakonid Crusher in that deck.
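The bookkeeping for that comparison might look like the sketch below. It is an illustration under assumptions, not the original analysis code: the record layout (a set of cards played plus a win flag) and the handful of sample games are invented, and the function name `dead_in_hand_check` is hypothetical.

```python
def dead_in_hand_check(games, experimental, substitutes):
    """Pair up the two groups of games compared for each substitute card.

    `games` is a list of (cards_played, won) pairs (a hypothetical layout).
    Returns {substitute: (baseline, with_substitute)}, where each element
    is a (wins, games) tuple. The baseline is every game in which the
    experimental card was NOT played.
    """
    without_exp = [won for cards, won in games if experimental not in cards]
    baseline = (sum(without_exp), len(without_exp))

    report = {}
    for sub in substitutes:
        with_sub = [won for cards, won in games if sub in cards]
        report[sub] = (baseline, (sum(with_sub), len(with_sub)))
    return report

# Made-up games, purely for illustration.
games = [
    ({"Drakonid Crusher", "Azure Drake"}, True),
    ({"Azure Drake"}, True),
    ({"Sylvanas Windrunner"}, False),
    ({"Force of Nature"}, True),
    ({"Drakonid Crusher"}, True),
]
report = dead_in_hand_check(
    games, "Drakonid Crusher", ["Azure Drake", "Sylvanas Windrunner"]
)
for sub, (baseline, with_sub) in report.items():
    print(sub, "baseline:", baseline, "with substitute:", with_sub)
```

Each pair of counts can then be fed to whatever significance test you prefer. One caveat worth keeping in mind: the two groups can share games (a game with Azure Drake but no Crusher lands in both), so a test that assumes independent samples is only a rough guide here.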

Obviously, this type of trick is inferior to having data on cards drawn in the first place, since it relies on picking reasonable substitutes. It also assumes that the win rate for a given substitute card in the deck would be statistically indistinguishable from the win rate if a second copy of that card were substituted for Drakonid Crusher or whatever other experimental card. It is better than nothing, however, and it squeezes a little more out of the data from a single deck list, without having to compare data from two slightly different lists. Since the built-in assumption about the experimental card being dead in hand is so extreme, hopefully that compensates for any messiness in the analysis that follows from it.

Some Interesting Things I Learned

I thought I would share some of the most interesting tidbits from my statistical explorations. I already mentioned that almost every card in Zoo is boring according to WRC. The one really interesting thing I noticed about the Zoo data was that games in which the hero power was used had a much lower win rate than games where I never tapped. That is perhaps as obvious as the power of Wild Growth and Savage Roar, since it indicates that you tend to win more when you draw a perfect curve and never need to tap, but it helped inform my Zoo play to maximize what I could do with the cards in my hand.

Handlock proved to be a deck with many superstars. Antique Healbot, Mountain Giant, and Emperor Thaurissan all showed massively higher win rates for games where they were played. In Combo Druid, by contrast, I thought it was interesting that Emperor Thaurissan, commonly believed to be overpowered in Druid, was merely average. Dr. Boom, on the other hand, showed a moderately higher-than-average win rate in every deck I analyzed, elevated by about the same amount each time. This would seem to confirm the popular notion that Dr. Boom's power is independent of the deck it fits in.

Concluding Thoughts

Win Rate by Card is a method that can show some interesting things about the power of cards in a deck relative to each other. In particular, it can help confirm that experimental big threats are working out, and reality-check the effectiveness of tech cards. WRC is, however, I hope, only the first foray into the statistical wilderness where card-by-card analysis is concerned.

I would be thrilled to share the details of my work so far with anyone curious about investigating further. All the data from my games is available at http://goo.gl/qcD3sX. If you want to use my Python code, feel free to send me a tweet @Shevvek or drop by my stream (twitch.tv/shevek18).